Generative Engine Optimisation (GEO): The Future of Search & AI

To understand visibility in 2026, you must stop thinking about “ranking” and start thinking about Information Retrieval Cost (IRC).

In traditional search, Google’s goal was to provide a directory.

In the generative era, platforms like Google Gemini and Perplexity AI are synthesising answers to save the user time.

If your website requires the AI to process 2,000 words of filler to extract three facts, your IRC is too high. The model will simply move to a ‘competitor’ like Wikipedia or a data-dense industry site that provides the same facts with less “noise.”

To win, you must become the most efficient data source for the model’s Attention Mechanism.

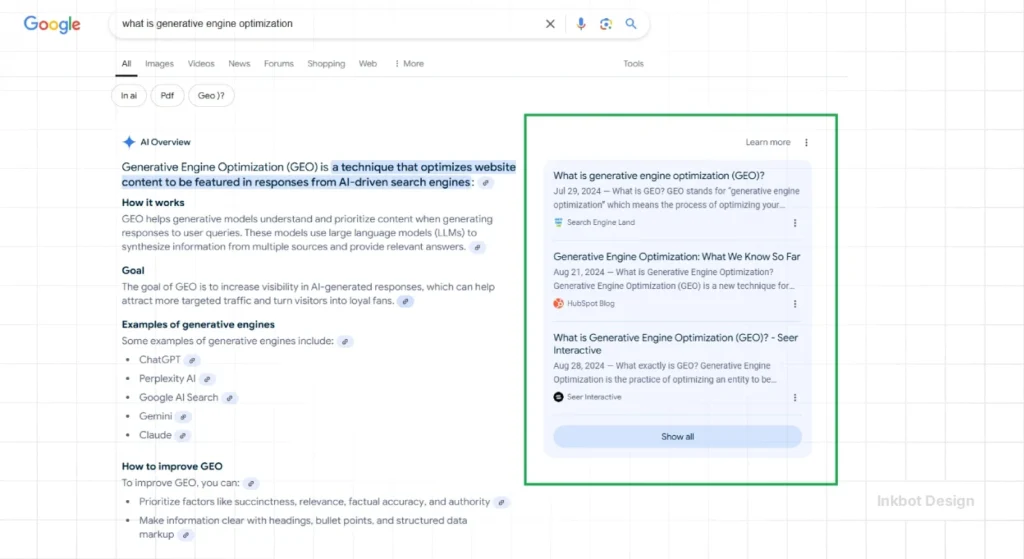

If you think search engine optimisation is still about ranking “Blue Links” on page one, you’ve already lost. We are now in the era of Generative Engine Optimisation (GEO).

This isn’t a “tweak” to your strategy; it is a complete demolition and rebuild of how information is served to the world.

- Generative Engine Optimisation (GEO) replaces traditional ranking; prioritise Information Retrieval Cost and Citation Probability.

- Maximise Information Gain with proprietary data, expert contrarianism, and real-time facts to force AI citations.

- Optimise for Entity Salience and Knowledge Graph relationships across web platforms for higher AI confidence.

- Design machine-readable sites: advanced Schema.org, semantic proximity, and concise factual blocks to minimise token waste.

What is Generative Engine Optimisation?

While traditional search relied on external validation (backlinks), Generative Engine Optimisation (GEO) relies on internal factual density and external Entity Salience.

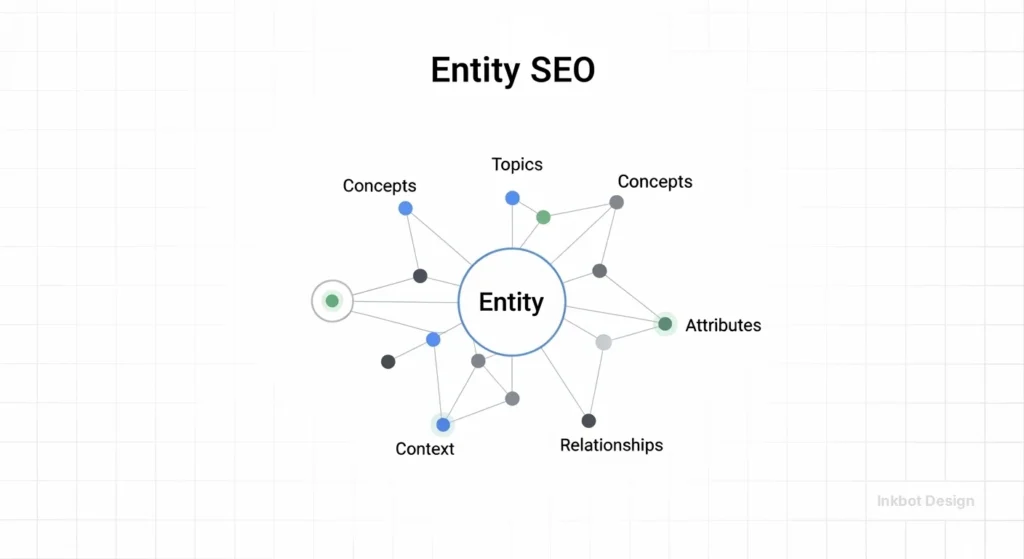

1. Entity Salience & The Knowledge Graph

Your brand is no longer just a URL; it is an entity within a relationship graph.

Knowledge graphs such as Google’s store facts as “triplets” (Subject-Predicate-Object), and language models such as OpenAI’s absorb similar relationships from the text they are trained on. For example: [Inkbot Design] → [is based in] → [Belfast, UK].

If these relationships aren’t clear across the web—on LinkedIn, Companies House, and industry directories—the AI will view your brand as a “low-confidence” suggestion.
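To make the triplet idea concrete, here is a minimal sketch, assuming you have already collected (subject, predicate, object) statements about your brand from each platform by hand. The brand, sources and values are hypothetical. It flags any single-valued predicate, such as location, where platforms disagree, which is the kind of inconsistency that turns a brand into a “low-confidence” entity.

```python
from collections import defaultdict

# Hypothetical statements about a hypothetical brand, gathered by hand:
# (source, subject, predicate, object)
OBSERVED = [
    ("website", "Example Studio", "is based in", "Belfast, UK"),
    ("linkedin", "Example Studio", "is based in", "Belfast, UK"),
    ("directory", "Example Studio", "is based in", "London, UK"),
    ("website", "Example Studio", "offers", "Brand Strategy"),
    ("linkedin", "Example Studio", "offers", "Logo Design"),
]

# Predicates that should have exactly one value; "offers" can legitimately have many
SINGLE_VALUED = {"is based in"}


def find_conflicts(observed, single_valued=SINGLE_VALUED):
    """Map (subject, predicate) -> {object: [sources]} where sources disagree on a single-valued predicate."""
    grouped = defaultdict(lambda: defaultdict(list))
    for source, subject, predicate, obj in observed:
        if predicate in single_valued:
            grouped[(subject, predicate)][obj].append(source)
    return {key: dict(objs) for key, objs in grouped.items() if len(objs) > 1}


for (subject, predicate), objects in find_conflicts(OBSERVED).items():
    print(f"[{subject}] [{predicate}] differs across sources: {objects}")
```

The fix for each conflict is editorial, not technical: correct the outlying listing so every source states the same fact.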

2. The Information Gain Formula

In 2026, “unique content” isn’t enough. You need high Information Gain.

Treat it as a measure of whether a source provides knowledge that isn’t already in the model’s pre-trained weights.

If you are paraphrasing a topic that ChatGPT already knows, you have zero gain. You must provide:

- Proprietary Benchmarks: e.g., “Our 2026 study of 500 London SMEs found that…”

- Expert Contrarianism: Challenging the AI’s consensus view with professional evidence.

- Real-time Data: Using IndexNow and structured data to get fresh facts into the search indexes that AI answer engines query live, so you are not limited by the model’s training cut-off.
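Models do not publish an Information Gain score, so the sketch below is only a rough proxy under stated assumptions: it treats a page as a set of fact statements and measures the share that are absent from a baseline standing in for “what the model already knows” (for example, facts pulled from the current top results). Both fact lists are hypothetical and hand-written.

```python
def normalise(fact: str) -> str:
    """Lower-case and collapse whitespace so trivially reformatted duplicates match."""
    return " ".join(fact.lower().split())


def information_gain_proxy(page_facts, baseline_facts) -> float:
    """Share of the page's distinct facts that are absent from the baseline, from 0.0 to 1.0."""
    page = {normalise(f) for f in page_facts}
    baseline = {normalise(f) for f in baseline_facts}
    if not page:
        return 0.0
    return len(page - baseline) / len(page)


# Hypothetical baseline standing in for "what the model already knows"
baseline = [
    "A brand is more than a logo",
    "Consistency builds recognition",
]

# Hypothetical page: two restated commonplaces plus two placeholder proprietary findings
page = [
    "A brand is more than a logo",
    "consistency builds  recognition",
    "Proprietary benchmark 1 from our client base",
    "Proprietary benchmark 2 from our client base",
]

print(f"Information gain proxy: {information_gain_proxy(page, baseline):.2f}")
```

Exact string matching is crude; a real audit would need to compare meaning rather than wording, but the principle is the same: paraphrased commonplaces score zero.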

3. Citation Probability (The New CTR)

Success is now measured by your Citation Probability. This is the mathematical likelihood that an LLM will include your link as a footnote.

Research from JASIST shows that using “Authoritative Language” and “Factual Density” can increase citation rates by up to 115% for sites that previously ranked at the bottom of page one.

The Death of the “Ten Blue Links”

According to Gartner, traditional search volume is forecasted to drop by 25% by the end of 2026.

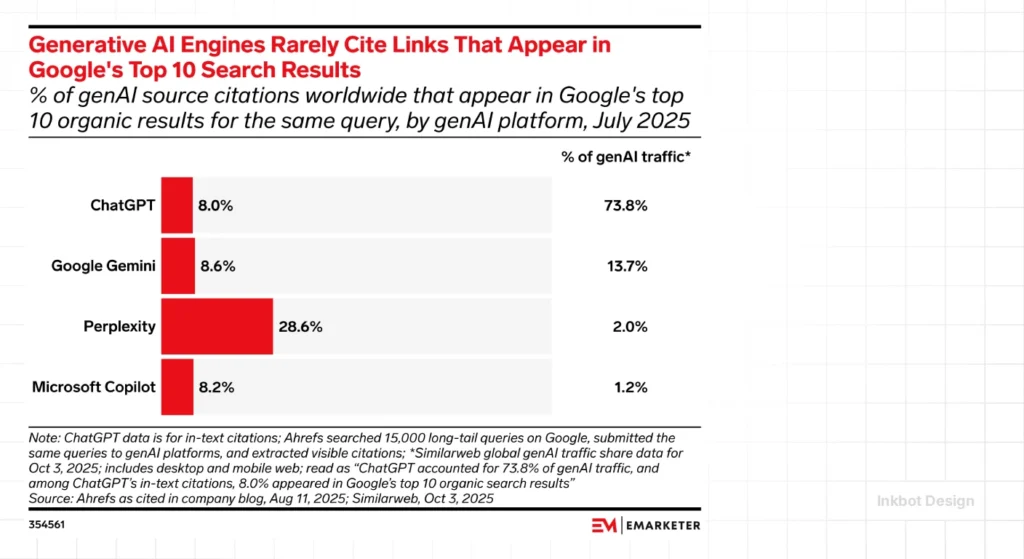

This isn’t a “soft decline”; it is a structural shift. Users are migrating to Answer Engines like Perplexity and agentic assistants like Microsoft Copilot.

When a user asks, “What is the best web design services provider for high-end branding?”, the AI doesn’t give them a list of ads and links. It gives them a paragraph.

If your name isn’t in that paragraph, you don’t exist.

The struggle today isn’t about search engine ranking; it is about being the source material for the answer.

SEO vs. GEO 2026

| Feature | Traditional SEO (2020-2024) | Generative Engine Optimisation (2026+) |
| --- | --- | --- |
| Primary Goal | Ranking in the Top 10 “Blue Links” | Maximising Citation Probability in AI Answers |
| Content Strategy | Keyword Density & Long-form Bloat | Information Gain & Entity Salience |
| Technical Focus | Core Web Vitals & Meta Tags | Machine-Readability & Schema Graphs |
| Trust Signal | Backlink Quantity (PageRank) | Source Credibility & Third-Party Validation |
| User Experience | Optimising for Human Clicks | Optimising for AI Agents & Direct Answers |
| Success Metric | Click-Through Rate (CTR) | Share of Model (SoM) & Brand Mentions |

Why Your Current Strategy is Redundant

In our fieldwork at Inkbot Design, we see the same mistake: brands treating AI as a “new search engine” rather than a “synthesis engine.”

In the old world, you could win with volume. In the GEO world, volume is a liability. Every piece of fluff you publish dilutes your “Entity Density.”

If an LLM has to parse 5,000 words of “In today’s fast-paced world…” to find one original thought, it will ignore you. The AI’s “Cost of Retrieval” is too high.

Technical GEO: Beyond the Meta Tag

To win in 2026, you need to understand how LLMs parse your site.

They aren’t just looking for keywords; they are building a relationship graph. This is where Entity SEO becomes the foundation of your entire digital presence.

The Information Gain Framework

LLMs like GPT-4o and Claude 3.5 are trained on massive datasets. They already know the basics of your industry.

If you write an article about “The Importance of Branding,” the LLM views that as “Zero Gain” content. It already knows that. It doesn’t need to cite you to explain it.

To get cited, you must provide “Synthetic Knowledge” or “Unique Attributes.”

- Proprietary Data: Statistics from your own client base.

- Controversial Observations: Professional opinions that challenge the consensus (which the AI will cite to provide “balanced” views).

- Specific Case Studies: Real-world examples with verifiable data points.

Real-World Example: The “Citation War”

Look at how Perplexity AI handles queries. It doesn’t just scrape; it attributes.

In a 2025 study on AI attribution, sources that utilised high-density technical SEO—specifically structured Schema.org markup—were cited 3x more often than those with better “traditional” rankings but poor data structure.

| Feature | Amateur Approach (Traditional SEO) | Pro Approach (GEO 2026) |
| --- | --- | --- |
| Content Goal | Keyword Density & Word Count | Information Gain & Entity Salience |
| Success Metric | Search Engine Results Page (SERP) Position | Citation Probability in LLM Answers |
| Structure | H1/H2 for Google Spiders | Schema Graphs for LLM Relational Mapping |
| Links | Quantity-based link building | Authority-based online reputation management |
| Update Frequency | “Freshness” for the sake of it | Accuracy & Fact-Density Updates |

Advanced Implementation: Designing for Agentic Search

The biggest shift in the last 12 months is the rise of Agentic Search.

AI agents (like OpenAI’s Operator or Google’s Jarvis) don’t just find your site; they browse it to execute a task.

Example: Optimising for a “Booking Agent”

Imagine a user tells their AI: “Find me a London branding agency that can start a project for £5,000 next month.” The agent will scan your site for these entities:

- Entity: Service(Branding)

- Entity: Location(London)

- Entity: Price(Amount: 5000, Currency: GBP)

- Entity: Availability(Date: 2026-04)

If this data is buried in a PDF or behind a “Contact Us” wall, you lose. If it is exposed as structured data (for example, a Service schema with an attached Offer), you win the conversion.
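Here is a minimal sketch of the booking-agent example, assuming the agency publishes its offer as schema.org JSON-LD: a `Service` with an `Offer` using the `price`, `priceCurrency` and `availabilityStarts` properties. The brand and values are illustrative, and `matches_request` is a hypothetical stand-in for an agent’s filtering logic, not how any real agent is known to work.

```python
import json
from datetime import date

# schema.org JSON-LD describing the service (values are illustrative)
service_jsonld = {
    "@context": "https://schema.org",
    "@type": "Service",
    "serviceType": "Branding",
    "provider": {"@type": "Organization", "name": "Example Studio"},
    "areaServed": {"@type": "City", "name": "London"},
    "offers": {
        "@type": "Offer",
        "price": "5000",
        "priceCurrency": "GBP",
        "availabilityStarts": "2026-04-01",
    },
}

# Serialise as it would appear inside <script type="application/ld+json">
markup = json.dumps(service_jsonld, indent=2)


def matches_request(data: dict, service: str, city: str, budget: float,
                    currency: str, start_by: date) -> bool:
    """Hypothetical agent check: does this Service satisfy the user's constraints?"""
    offer = data.get("offers", {})
    return (
        data.get("serviceType") == service
        and data.get("areaServed", {}).get("name") == city
        and offer.get("priceCurrency") == currency
        and float(offer.get("price", "inf")) <= budget
        and date.fromisoformat(offer.get("availabilityStarts", "9999-12-31")) <= start_by
    )


parsed = json.loads(markup)
print(matches_request(parsed, "Branding", "London", 5000, "GBP", date(2026, 4, 30)))
```

The point of the sketch is that every constraint in the user’s request maps to a named property; if any of them exists only as prose, the check has nothing to read.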

The Machine-Readable Architecture

To capture this traffic, your technical structure must be flawless. This goes beyond basic H1s. You need a Knowledge Graph approach to internal linking.

- Semantic Proximity: Group your pages by entity relationship, not just keyword clusters. Link your “Services” directly to the “Case Studies” and “Client Reviews” that validate them.

- Structured Data (JSON-LD): You must implement advanced Schema. In 2026, the Product and Organization types are the bare minimum. You now need ServiceChannel, UserReview, and Speakable markup.
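Semantic proximity in internal linking can be checked mechanically. The sketch below assumes a hypothetical site inventory in which each page is tagged with its type, the entity it covers and its outgoing internal links; it reports every service page that fails to link to the case studies and reviews tagged with the same service.

```python
# Hypothetical site inventory: URL -> (page type, entity, outgoing internal links)
PAGES = {
    "/services/branding": ("service", "Branding", {"/case-studies/acme-rebrand"}),
    "/services/web-design": ("service", "Web Design", set()),
    "/case-studies/acme-rebrand": ("case_study", "Branding", {"/services/branding"}),
    "/reviews/branding": ("review", "Branding", set()),
    "/case-studies/shop-site": ("case_study", "Web Design", set()),
}


def missing_validation_links(pages):
    """For each service page, list same-entity case studies and reviews it does not link to."""
    gaps = {}
    for url, (kind, entity, links) in pages.items():
        if kind != "service":
            continue
        evidence = {
            other for other, (other_kind, other_entity, _) in pages.items()
            if other_kind in ("case_study", "review") and other_entity == entity
        }
        missing = sorted(evidence - links)
        if missing:
            gaps[url] = missing
    return gaps


for url, missing in missing_validation_links(PAGES).items():
    print(url, "should link to", missing)
```

Grouping by entity rather than by keyword is the design choice here: the check asks “what validates this service?”, not “what shares this phrase?”.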

Example: Advanced Organisation Schema for 2026

```json
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Inkbot Design",
  "url": "https://inkbotdesign.com",
  "logo": "https://inkbotdesign.com/logo.png",
  "sameAs": [
    "https://www.linkedin.com/company/inkbot-design/",
    "https://en.wikipedia.org/wiki/Entity_Name"
  ],
  "areaServed": "United Kingdom",
  "knowsAbout": ["Brand Strategy", "Generative Engine Optimisation", "Logo Design"],
  "award": "Top 10 Branding Agencies London 2026",
  "contactPoint": {
    "@type": "ContactPoint",
    "telephone": "+44-000-000-000",
    "contactType": "customer service"
  }
}
```

Eliminating “Token Waste”

AI models process text as tokens. Every redundant phrase (“In this article, we will look at…”) wastes part of the model’s processing budget.

- Avoid AI Slop: Words like “delve,” “unlock,” “vibrant,” and “testament” are high-probability markers for generic AI content. If a model detects this, it lowers your Source Credibility score, assuming your content is derivative. Many content creators now use an AI humanizer tool to refine machine-generated text and reduce these detectable patterns.

- The “400-Word Rule”: If you cannot convey a unique, factual insight within 400 words, the AI is unlikely to cite that specific section. Break long-form “Ultimate Guides” into semantic “Knowledge Blocks” that each answer one specific query.
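A quick way to act on this advice is to scan drafts for filler before publishing. The sketch below flags the words listed above plus a couple of stock openers; the lists are illustrative assumptions, not a list any model is known to use, and the word count uses a simple regex rather than a real tokenizer.

```python
import re

# Illustrative lists: words named above plus common filler openers (assumed, not exhaustive)
SLOP_WORDS = {"delve", "unlock", "vibrant", "testament"}
FILLER_PHRASES = ["in this article, we will look at", "in today's fast-paced world"]


def audit_text(text: str) -> dict:
    """Return word count, flagged slop words and filler phrases found in the text."""
    # Normalise curly apostrophes so "today’s" matches "today's"
    lowered = text.lower().replace("\u2019", "'")
    words = re.findall(r"[a-z']+", lowered)
    slop = sorted({w for w in words if w in SLOP_WORDS})
    filler = [p for p in FILLER_PHRASES if p in lowered]
    return {"words": len(words), "slop_words": slop, "filler_phrases": filler}


draft = ("In today's fast-paced world, brands must delve deeper. "
         "Our audit of client sites found three recurring schema errors.")
print(audit_text(draft))
```

Treat the output as an editing prompt: the second sentence of the draft carries the only real information, which is exactly the signal the first sentence dilutes.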

Debunking the “Long-Form Content” Myth

Let’s kill this one right now: The “3,000 words for the sake of SEO” era is dead. It is worse than dead; it is a poison.

AI models use something called “Attention Mechanisms.” When an LLM “reads” your page to answer a user’s prompt, it breaks the text into “tokens,” and the attention mechanism decides how much weight each token gets.

If your content is bloated with transitional phrases and “AI vocabulary” (like “unlock,” “transform,” or “landscape”), you are burying the “Signal” in the “Noise.”
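The signal-versus-noise point can be illustrated with the softmax at the heart of attention. This is a deliberately simplified toy, assuming a single query, one relevant token with a higher score and filler tokens scored at zero; real models use many heads and layers with learned scores, so only the direction of the effect carries over.

```python
import math


def softmax(scores):
    """Numerically stable softmax: exponentiate shifted scores and normalise to sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def signal_weight(filler_tokens: int, signal_score: float = 2.0) -> float:
    """Attention weight on the single relevant token when surrounded by filler scored 0."""
    weights = softmax([signal_score] + [0.0] * filler_tokens)
    return weights[0]


for n in (3, 30, 300):
    print(f"{n:>3} filler tokens -> weight on signal {signal_weight(n):.3f}")
```

Because the weights must sum to 1, every extra filler token takes a share that would otherwise go to the fact you want cited.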

The Harsh Truth: An LLM would rather cite a 400-word page that contains three unique, verifiable facts than a 4,000-word “Ultimate Guide” that says nothing new.

The State of GEO in 2026: The “Agentic” Shift

In the last 12 months, we’ve moved from “Chatbots” to “Agents.” These agents don’t just find information; they execute tasks.

If someone says to their AI, “Request a quote for a branding package from the most reputable UK agency,” the AI isn’t looking at your meta descriptions. It is looking at:

- Verified Reviews: Third-party validation on platforms the AI trusts.

- Semantic Proximity: How often your brand is mentioned alongside terms like “reputable,” “award-winning,” and “UK branding.”

- Local Context: Your local SEO signals and presence in regional entities.
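Semantic proximity in this sense comes down to co-occurrence. A minimal sketch, assuming you have a corpus of third-party mentions (reviews, articles) as plain text: it splits each text into sentences with a naive regex and counts how often the brand appears in the same sentence as each target term. The brand, terms and mentions are hypothetical.

```python
import re
from collections import Counter

BRAND = "example studio"
TARGET_TERMS = ["reputable", "award-winning", "uk branding"]

# Hypothetical third-party mentions
MENTIONS = [
    "Example Studio is a reputable choice. Their pricing is clear.",
    "We hired Example Studio, an award-winning team for UK branding work.",
    "Another agency was reputable but slow.",
]


def co_occurrences(texts, brand, terms):
    """Count sentences in which the brand and each term appear together."""
    counts = Counter()
    for text in texts:
        # Naive sentence split on ., ! or ? followed by whitespace
        for sentence in re.split(r"(?<=[.!?])\s+", text.lower()):
            if brand in sentence:
                for term in terms:
                    if term in sentence:
                        counts[term] += 1
    return counts


print(co_occurrences(MENTIONS, BRAND, TARGET_TERMS))
```

Note that the third mention uses “reputable” about a different agency, so it does not count towards the brand.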

The “Agentic” search means your website is no longer the destination; it is the API for your business.

If your site isn’t structured as a clean, factual data source, the agents will bypass you for a competitor who is easier to “read.”
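If your website is the API, its JSON-LD blocks are the response body. A minimal sketch of how a crawler or agent might read them, using only Python’s standard-library `html.parser` and `json`; real agents may work quite differently, and the sample page is hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # This sketch simply skips malformed JSON-LD


page = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Organization", "name": "Example Studio"}
</script>
</head><body><p>Welcome</p></body></html>
"""

extractor = JsonLdExtractor()
extractor.feed(page)
print([item.get("@type") for item in extractor.items])
```

A page that fails this kind of extraction (no JSON-LD, or JSON-LD that does not parse) gives an agent nothing structured to work with.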

The “Citation War” Strategy: Reclaiming Your Stolen Visibility

When an AI synthesises your content without a link, it’s often because your data was “common knowledge.” To win the Citation War, you must force the AI to credit you.

1. The “Data Bait” Method

Publishing raw, original data is the most effective way to secure a footnote.

In 2025, a UK-based SaaS company released a 50-page report on “AI Productivity.” Instead of one big PDF, they created 10 landing pages with uniquely titled tables.

Within three weeks, Perplexity was citing those specific tables for 80% of related industry queries.

2. Third-Party Validation Flywheel

LLMs don’t just trust your website; they look for “Social Proof” in their training data.

- The Reddit Factor: In 2026, Perplexity and Google AI Overviews heavily weight discussions on Reddit and Quora. If your brand is recommended by humans in these “trusted enclaves,” the AI’s confidence in your entity triples.

- Journalistic Recency: AI models prioritise news sources for current events. SEC-style technical PR—where you announce data findings—is now more valuable for visibility than a guest post on a low-tier blog.

The 2026 Content Audit: Pruning for Information Gain

Most websites are suffering from “Content Bloat”—thousands of pages that add zero value to an LLM. To recover your visibility, you must perform an Information Gain Audit.

| Content Type | 2024 Status | 2026 Action | Why? |
| --- | --- | --- | --- |
| “Ultimate Guides” | High Value | Prune/Consolidate | LLMs have already synthesised “General Knowledge.” |
| Proprietary Data | Medium Value | Highlight/Expand | This is the “Data Bait” that triggers AI citations. |
| AI-Generated Slop | Low Value | Delete | High “Token Waste” signals low source credibility. |
| Case Studies | High Value | Add Structured Data | Proves real-world experience (E-E-A-T via data). |
| News/Recent Trends | Medium Value | Real-time Updates | Beats the model’s training cut-off via IndexNow. |
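IndexNow works by notifying participating search engines (Bing is one) as soon as URLs change. The protocol accepts a JSON POST to an endpoint such as `https://api.indexnow.org/indexnow` containing `host`, `key`, `keyLocation` and `urlList`, and the key must also be served as a text file on your site. The sketch below only builds the request with the standard library and does not send it; the host, key and URL are placeholders.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list) -> urllib.request.Request:
    """Build (but do not send) an IndexNow bulk submission for changed URLs on one host."""
    payload = {
        "host": host,
        "key": key,
        # The key file must be served at this location so engines can verify ownership
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


req = build_indexnow_request(
    "www.example.com",
    "0123456789abcdef",  # placeholder key
    ["https://www.example.com/research/2026-benchmarks"],
)
# To submit for real: urllib.request.urlopen(req)
print(req.get_method(), req.full_url)
```

Submitting only the URLs that actually changed keeps the notification meaningful; resubmitting unchanged pages adds nothing.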

The “400-Word Signal” Rule

In our research at Inkbot Design, we’ve found that the most cited sections in AI Overviews are typically 300–500 words of high-density fact. If a section is 1,200 words but only contains one unique fact, the AI’s Attention Mechanism may “miss” the signal.

The Fix: Break your long-form content into “Knowledge Blocks.” Each block should open with an H3 title that mirrors a likely sub-query and end with a clear, factual conclusion.
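One way to audit an existing page against this advice is to split it at its H3 headings and measure each block. A minimal sketch, assuming the content is available as Markdown-style text in which H3s start with `### `; the 300 to 500 word band comes from the observation above, and the function names are hypothetical.

```python
import re


def split_knowledge_blocks(text: str):
    """Split text at '### ' headings into (heading, body) pairs; text before the first H3 is ignored."""
    blocks = []
    heading, body = None, []
    for line in text.splitlines():
        if line.startswith("### "):
            if heading is not None:
                blocks.append((heading, "\n".join(body)))
            heading, body = line[4:].strip(), []
        elif heading is not None:
            body.append(line)
    if heading is not None:
        blocks.append((heading, "\n".join(body)))
    return blocks


def review_blocks(text: str, low: int = 300, high: int = 500):
    """Label each block 'short', 'ok' or 'long' by word count against the target band."""
    report = []
    for heading, body in split_knowledge_blocks(text):
        count = len(re.findall(r"\S+", body))
        status = "short" if count < low else "long" if count > high else "ok"
        report.append((heading, count, status))
    return report


sample = ("Intro\n### What does a rebrand cost?\n" + "word " * 350
          + "\n### How long does it take?\n" + "word " * 1200)
for heading, count, status in review_blocks(sample):
    print(f"{heading}: {count} words ({status})")
```

Word count is only a prompt to look closer: a “long” block is a candidate for splitting into separate sub-queries, each with its own H3.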

Measuring Success: Beyond the SERP

In 2026, “Rank #1” is a vanity metric. If a user gets their answer from Google Gemini without clicking, your “Rank” didn’t drive revenue—your “Citation” did. You must track Share of Model (SoM).

1. Citation Probability (CP)

Use an LLM auditor (or manual testing with Perplexity) to see how often your brand is cited for your top 50 keywords.

- Target: A CP of >30% for core service queries.
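Citation Probability as described here is simply a proportion, so it can be computed from whatever audit log you keep. A minimal sketch, assuming each test run of a query records the domains the answer engine cited (collected manually or with a tool of your choice); the queries, domains and results are hypothetical.

```python
from collections import defaultdict

# Hypothetical audit log: (query, domains cited in that answer)
RUNS = [
    ("branding agency belfast", ["example.com", "wikipedia.org"]),
    ("branding agency belfast", ["competitor.co.uk"]),
    ("logo design cost uk", ["example.com"]),
    ("logo design cost uk", ["example.com", "competitor.co.uk"]),
]


def citation_probability(runs, domain):
    """Return overall and per-query share of runs in which `domain` was cited."""
    per_query = defaultdict(lambda: [0, 0])  # query -> [runs citing domain, total runs]
    for query, cited in runs:
        per_query[query][1] += 1
        if domain in cited:
            per_query[query][0] += 1
    hits = sum(h for h, _ in per_query.values())
    total = sum(t for _, t in per_query.values())
    overall = hits / total if total else 0.0
    return overall, {q: h / t for q, (h, t) in per_query.items()}


overall, by_query = citation_probability(RUNS, "example.com")
print(f"Overall CP: {overall:.0%} (target > 30%)")
for query, cp in by_query.items():
    print(f"  {query}: {cp:.0%}")
```

Answers vary between runs, so repeating each query several times gives a steadier estimate than a single check.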

2. Referral Traffic from “Answer Engines”

In GA4, monitor traffic from chatgpt.com (formerly chat.openai.com), perplexity.ai, and gemini.google.com.

- Note: This traffic is often “Pre-Qualified.” A user coming from an AI agent has already been “sold” on your expertise by the model. Conversion rates for AI referrals are typically 3x higher than traditional organic search.
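Before building a GA4 segment, it can help to classify raw referrer URLs yourself. A minimal sketch using `urllib.parse`; the hostname list is an assumption based on the domains above (ChatGPT’s main domain is now chatgpt.com) and will need updating as products change. The generated pattern could be reused in a “matches regex” condition on session source, assuming your GA4 report or segment offers that option.

```python
import re
from urllib.parse import urlparse

# Assumed answer-engine hostnames (not exhaustive; check your own referral reports)
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}

# Equivalent alternation pattern for a "matches regex" condition on session source
AI_SOURCE_REGEX = "|".join(re.escape(host) for host in AI_SOURCES)


def classify_referrer(referrer: str):
    """Return the answer-engine name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer).hostname or "").lower()
    for known, label in AI_SOURCES.items():
        # Match the host itself or any subdomain of it (e.g. www.perplexity.ai)
        if host == known or host.endswith("." + known):
            return label
    return None


for ref in ["https://www.perplexity.ai/search?q=branding", "https://chatgpt.com/", "https://www.google.com/"]:
    print(ref, "->", classify_referrer(ref))
print(AI_SOURCE_REGEX)
```

Matching on the parsed hostname, rather than searching the whole URL, avoids false positives such as a Google search URL that merely contains “perplexity.ai” in its query string.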

3. Entity Salience Score

Use the Google Natural Language API to test your key pages. It will return a “Salience” score for your brand entity.

- Goal: Your brand should be the most salient entity on the page, outperforming the generic industry terms.
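The Natural Language API’s `analyzeEntities` method returns a list of entities, each with a `name`, a `type` and a `salience` value between 0 and 1. The sketch below works on a response you have already saved as JSON, so it needs no credentials; the response shown is a hand-written, hypothetical example of that shape, not real API output.

```python
import json

# Hypothetical saved response in the shape returned by analyzeEntities
RESPONSE = json.loads("""
{
  "entities": [
    {"name": "branding", "type": "OTHER", "salience": 0.31},
    {"name": "Example Studio", "type": "ORGANIZATION", "salience": 0.42},
    {"name": "Belfast", "type": "LOCATION", "salience": 0.08}
  ],
  "language": "en"
}
""")


def brand_salience_report(response: dict, brand: str):
    """Return the brand's salience, its rank among entities, and whether it ranks first."""
    ranked = sorted(response.get("entities", []), key=lambda e: e.get("salience", 0.0), reverse=True)
    for position, entity in enumerate(ranked, start=1):
        if entity.get("name", "").lower() == brand.lower():
            return {"salience": entity["salience"], "rank": position, "is_top": position == 1}
    return {"salience": 0.0, "rank": None, "is_top": False}


print(brand_salience_report(RESPONSE, "Example Studio"))
```

If the brand ranks below a generic term such as “branding”, the page is more about the category than about you.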

The Verdict: Adapt or Evaporate

The era of Generative Engine Optimisation isn’t coming; it’s been here for months, and most of you are behind the curve.

You are competing for a shrinking pool of clicks while the AI synthesises your hard-earned knowledge and gives it away for free—often without even mentioning your name.

To win, you must stop being a “content creator” and start being an “entity authority.” You must provide the data points that the models lack.

You must simplify your technical structure so an AI agent can “understand” your business in milliseconds.

The future of search isn’t a list of links. It’s a conversation. And if you don’t have something unique to say, the AI will stop inviting you to the table.

Ready to stop being invisible to the AI that’s stealing your traffic?

Contact Inkbot Design today to audit your semantic footprint. We don’t do fluff, and we don’t do 2024 SEO. We build the entities that the future of search cannot ignore.

Frequently Asked Questions (FAQ)

What is the main difference between SEO and GEO?

SEO focuses on ranking in traditional search engine results pages through keywords and backlinks. GEO (Generative Engine Optimisation) focuses on being cited and recommended by AI models and generative search engines like Perplexity or Google AI Overviews (formerly SGE) by prioritising entity salience and information gain.

Why is my organic traffic dropping despite good SEO rankings?

This is likely due to the “Zero-Click” nature of generative search. AI engines are answering user queries directly in the search interface using your data without sending the user to your website. You need to shift your strategy to focus on citation and brand mentions.

How do I improve my “Citation Probability”?

Focus on factual density. Use structured Schema markup, provide unique data or statistics, and use clear, direct language. Avoid “AI fluff” and transitional filler. The easier it is for an AI to extract a fact from your page, the more likely it is to cite you.

Is backlinking still important for GEO?

Yes, but the quality has changed. In GEO, “Link Building” is about establishing an association between your entity and other high-authority entities. It’s less about “Link Juice” and more about “Contextual Validation” within the LLM’s relationship graph.

How do I know if my site is “machine-readable” for AI agents?

A machine-readable site uses a flat hierarchy, clear Schema.org markup, and lacks intrusive pop-ups or “heavy” JavaScript that blocks rapid scraping. You can test this by using a “Text-Only” browser or an LLM auditor to see if the core facts of your business are extractable in under 500ms.

What is “Information Gain” in SEO?

Information Gain refers to providing new, unique information that doesn’t already exist in the search results or the LLM’s training data. This includes original research, proprietary data, or unique professional insights that force the AI to cite your live source.

How does Schema.org help with AI visibility?

Schema acts as a “translator” for AI. While LLMs are good at understanding natural language, structured data provides a clear, unambiguous map of your entities, relationships, and facts, significantly reducing the AI’s “Cost of Retrieval.”

What role does brand reputation play in GEO?

Brand reputation is critical. Generative engines use “Source Credibility” as a filter. If your brand is mentioned positively across authoritative third-party sites, the AI is more likely to trust your data and recommend your services to users.

Is “Domain Authority” (DA) still relevant in 2026?

DA is being replaced by Entity Salience and Source Credibility. While a high-authority domain helps with crawling, the AI cares more about your “Topical Authority” in a specific niche. A smaller, focused site can outrank a massive, generic site for specific technical queries.

How do I measure the ROI of GEO?

You must track “Attributed Brand Mentions” and “Referral Traffic from AI Domains.” In Google Analytics 4, create a custom segment for traffic from perplexity.ai, chatgpt.com (formerly chat.openai.com), and gemini.google.com. The goal is to see a direct correlation between these citations and high-intent conversions.

What is an “Agentic” search?

Agentic search refers to AI agents that perform actions on behalf of the user, such as booking a service or purchasing a product. This requires your website to be optimised for “machine readability” so the agent can accurately execute the user’s request.

How do I start with GEO today?

Start by auditing your most important pages for “Information Gain.” Remove redundant content, implement advanced Schema, and ensure your brand is correctly identified as an entity in the Google Knowledge Graph. Focus on being the “definitive source” for your niche.