Multimodal Creative Strategy: Text, Image, & Voice

If your brand’s visual cues don’t match its linguistic patterns, and those patterns don’t translate into an audible persona, you are creating friction.

Friction kills conversions.

In 2026, the stakes are higher because humans aren’t the only ones judging you.

Large Language Models (LLMs) and Generative Engines are now the primary gatekeepers of your audience.

If they can’t find a cohesive “entity” to index, you don’t exist.

This is where advertising design meets hard-nosed technical execution.

- Multimodal Creative Strategy: synchronise text, image, and voice so a single cohesive brand entity is indexable by AI agents.

- Semantic-First: define a precise lexicon and semantic core; every word must serve entity mapping and reduce vector dissonance.

- Visual and Aural Alignment: ensure images' latent vectors and SSML-coded voice prosody match textual claims to maximise Trust Score.

- Technical Implementation: embed Schema.org, image metadata, and SSML; use a Technical Creative Stack for cross-modal synchronisation and audits.

What is a Multimodal Creative Strategy?

Multimodal Creative Strategy is a technical framework for synchronising a brand’s identity across three primary sensory vectors: textual (written copy and semantic data), visual (static and motion imagery), and aural (voice and sonic branding).

It ensures that the brand “entity” remains consistent regardless of the medium or the AI interpreting it.

Key Components:

- Semantic Consistency: Ensuring the vocabulary and syntax used in text align with the brand’s core values.

- Visual Salience: Using visual hierarchy to guide attention in a way that reinforces the textual message.

- Aural Persona: Defining the specific phonetic and tonal characteristics of the brand’s synthetic voice.

How AI Agents “See” Your Brand in 2026

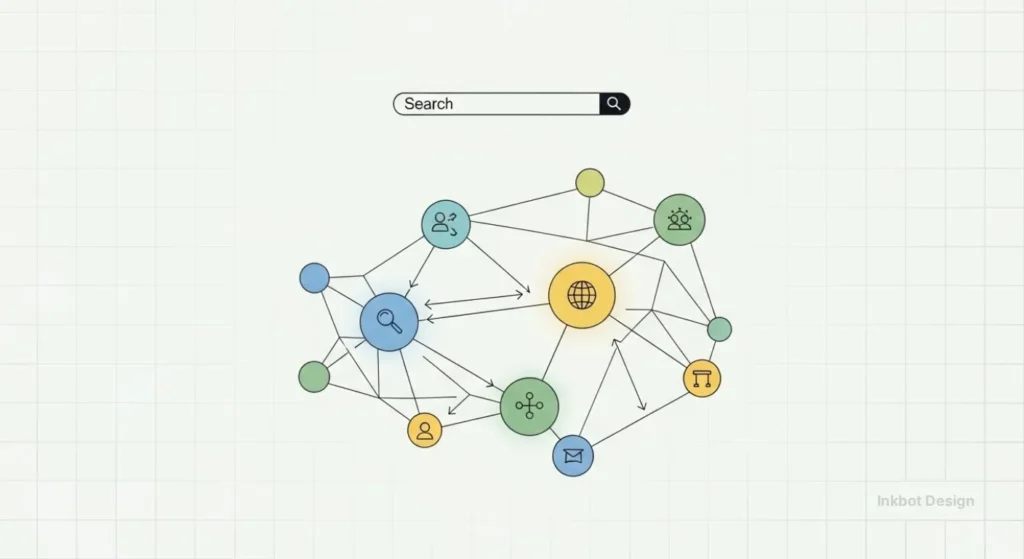

In 2026, the primary consumer of your content isn’t just a human scrolling on a phone; it is an Agentic AI—a system like OpenAI’s Operator or Google’s Gemini Agents—tasked with making decisions on behalf of the user.

These agents do not “read” your website; they ingest it into a high-dimensional vector space.

To an AI, your brand is a cluster of coordinates.

If your textual claims (e.g., “We are a high-security fintech”) are mathematically distant from your visual cues (e.g., casual, low-contrast stock photos), the agent detects Semantic Dissonance.

This lowers your “Probability of Recommendation.”
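To make "mathematically distant" concrete, here is a minimal sketch using cosine similarity, a common way to compare embeddings. The three-dimensional vectors are made-up illustrations, not output from any real model, and nothing here claims to reproduce how a specific agent scores brands.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)

# Hypothetical embeddings; real models use hundreds of dimensions.
text_claim = [0.9, 0.1, 0.0]    # "We are a high-security fintech"
vault_photo = [0.8, 0.2, 0.1]   # image of a secure vault
casual_photo = [0.1, 0.2, 0.9]  # casual, low-contrast stock photo

aligned = cosine_similarity(text_claim, vault_photo)
dissonant = cosine_similarity(text_claim, casual_photo)
```

With these toy numbers, `aligned` comes out close to 1 and `dissonant` is far lower. That gap is the dissonance described above.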

The Multimodal Integration Workflow:

- Step 1: Entity Grounding. Define your brand using Schema.org Organization and Brand types to link your text to a physical or legal entity.

- Step 2: Visual Vectorisation. Ensure every image contains embedded metadata that mirrors your core keywords. AI models now use CLIP (Contrastive Language-Image Pre-training) to check if an image of a “secure vault” actually aligns with the word “security.”

- Step 3: Aural Encoding. Using SSML 1.1 standards, define your brand’s “vocal metadata” so that voice-activated agents can accurately replicate your persona.
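Step 1 can be sketched as a small script that emits JSON-LD. The company name, URLs, and the `organization_jsonld` helper are placeholders invented for illustration. The property names (`legalName`, `sameAs`, `brand`) are standard Schema.org vocabulary.

```python
import json

def organization_jsonld(name, legal_name, url, logo_url, same_as):
    """Build a Schema.org Organization node that also declares a Brand."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "legalName": legal_name,   # ties the brand to the legal entity
        "url": url,
        "logo": logo_url,
        "sameAs": list(same_as),   # official profiles that confirm the entity
        "brand": {"@type": "Brand", "name": name},
    }

# Placeholder values; swap in your own details.
doc = organization_jsonld(
    name="Example Fintech",
    legal_name="Example Fintech Ltd",
    url="https://www.example.com",
    logo_url="https://www.example.com/logo.png",
    same_as=["https://www.linkedin.com/company/example"],
)

# Wrap the JSON-LD in the script tag that goes into the page <head>.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(doc, indent=2)
    + "\n</script>"
)
```

Paste the resulting `snippet` into your page template and run it through a structured-data validator before shipping.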

Example: A luxury automotive brand in 2026 uses a “Semantic-First” approach. Their text uses precise, technical engineering terms. Their images are high-contrast, representing “precision.” Their synthetic voice, generated via ElevenLabs Enterprise, uses a mid-range frequency with a controlled, “authoritative” tempo. The AI agent sees these three distinct signals as a single, high-confidence “Entity Cluster.”

The Textual Pillar: Beyond “Copywriting”

Text is no longer just for reading. It is for “feeding.”

In the current era of Generative Engine Optimisation (GEO), your text serves two masters: the human reader who wants a solution, and the LLM that needs to categorise your brand as an authority.

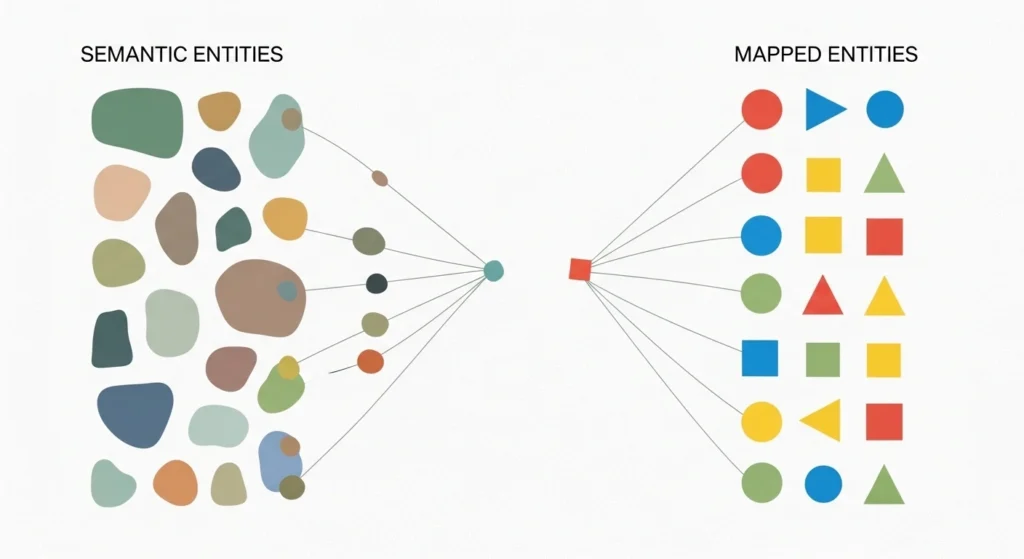

Semantic Entity Mapping

When we build a brand identity, we start with the lexicon. If you are a high-end consultancy, you don’t “help” clients; you “architect solutions” or “mitigate risk.”

This isn’t about being pretentious; it’s about semantic density.

Gartner predicted in 2024 that traditional search engine volume would drop 25% by 2026 as users shift to AI chatbots and other virtual agents.

These chatbots rely on “entity associations.” If your text uses generic, low-value verbs, the AI associates you with low-value competitors.
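One way to enforce a lexicon is a simple copy check. The sketch below assumes a hypothetical mapping from generic verbs to preferred phrasing; the `LEXICON` entries and the `flag_generic_terms` helper are illustrative, not a standard tool.

```python
import re

# Hypothetical lexicon for a high-end consultancy: generic verb -> preferred phrasing.
LEXICON = {
    "help": "architect solutions for",
    "fix": "mitigate",
}

def flag_generic_terms(copy, lexicon=LEXICON):
    """Return (generic_word, suggestion) pairs for whole-word matches, in order of appearance."""
    findings = []
    for match in re.finditer(r"[A-Za-z']+", copy):
        word = match.group(0).lower()
        if word in lexicon:
            findings.append((word, lexicon[word]))
    return findings

findings = flag_generic_terms("We help clients fix risk. Helpful teams win.")
# "Helpful" is not flagged because only whole words are compared.
```

Run it over every page before publishing, and treat the suggestions as prompts for a human editor rather than automatic replacements.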

The Death of “Fluff” Copywriting

I’ve audited hundreds of sites where the H1 is something like “We Bring Your Dreams to Life.” That is a waste of pixels.

It tells the user nothing and tells the AI even less. A multimodal approach demands that every word has a functional purpose.

Real-World Example:

Look at Apple. Their textual strategy is famously sparse. They don’t describe their products with adjectives; they use nouns that imply status.

They don’t say “The screen is very bright”; they say “Super Retina XDR.” They create new entities that they then own in the consumer’s mind and in the search engine’s index.

The Visual Pillar: More Than Just “Pretty”

Visuals are the fastest way to convey information, but most SMBs use them as “decoration.”

If your imagery doesn’t directly correlate with your textual claims, the brain experiences “cognitive dissonance.”

The Vectorisation of Style

In 2026, we look at images through the lens of “latent space.”

When an AI “sees” your website, it doesn’t see a “nice photo of a team”; it sees a collection of vectors representing lighting, composition, and colour theory.

If these vectors don’t match the “mood” of your display advertising, your brand authority takes a hit.

Visual Hierarchy and Conversion

A common mistake is burying the call-to-action.

A professional multimodal strategy uses contrast and “eye-tracking paths” to ensure the visual narrative leads to a business outcome. If you are running pay-per-click advertising, the ad visual must be a 1:1 conceptual match to the landing page visual.

The Multimodal Creative Stack: Tools for 2026

Implementing this strategy requires more than just a creative suite; it requires a Technical Creative Stack that allows for cross-modal synchronisation.

| Category | Industry-Leading Tool | Multimodal Function | Ideal For |
| --- | --- | --- | --- |
| Linguistic | Claude | Developing brand lexicons and semantic maps. | Mid-market to Enterprise |
| Visual | Midjourney v7 / Adobe Firefly | Generating “Latent-Consistent” imagery via style-references. | Creative Agencies |
| Aural | ElevenLabs / Play.ht | Creating custom synthetic brand voices with specific prosody. | SMBs & YouTubers |
| Technical | Google Cloud TTS | Implementing SSML for precise voice-over control. | Technical Developers |
| Coordination | Brandfolder / Contentful | Managing “Multimodal Assets” with unified metadata. | Enterprise Marketing |

The Aural Pillar: The Sound of Authority

Voice is the most neglected aspect of creative strategy. With the explosion of smart speakers and AI voice-cloning, your brand needs a literal “voice.”

Sonic Branding

Think of the Netflix “Ta-dum” or the Intel bong. That is sonic branding. But in 2026, it goes deeper. It includes the “prosody”—the rhythm and intonation—of your customer service AI.

If your brand identity is “edgy and direct,” but your voice assistant is “polite and subservient,” you’ve broken the brand promise.

SSML and Brand Tone

We now use Speech Synthesis Markup Language (SSML) to hard-code brand personality into voice outputs. This allows us to control the pitch, rate, and volume of how a brand “speaks.”

Real-World Example:

Mastercard invested millions into a multi-sensory brand identity. They didn’t just design a logo; they created a melody that plays when you complete a transaction. This “audio receipt” provides a sense of security and completion that a visual-only interface cannot match. According to a study by the Ehrenberg-Bass Institute, distinctive brand assets that appeal to multiple senses are 3x more likely to be remembered.

A Step-by-Step Guide to SSML Implementation

While a “tone of voice” document is a start, 2026 demands that you “hard-code” your personality into the systems that speak for you.

Speech Synthesis Markup Language (SSML) is the XML-based standard for telling AI exactly how to pronounce your brand name, where to pause for dramatic effect, and which words to emphasise.

How to Implement a “Professional/Authoritative” Tone:

- Prosody Adjustment: Use the <prosody> tag to lower the pitch and slow the rate. Listeners tend to perceive a slower, deeper voice as more authoritative.

- Emphasis Tags: Use <emphasis level="moderate"> on key brand verbs (e.g., protect, innovate, solve).

- Phonetic Accuracy: Use the <phoneme> tag to ensure AI doesn’t mispronounce technical jargon or unique brand names.

Sample SSML Code for a 2026 Brand Greeting:

```xml
<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="en-GB">
  <voice name="en-GB-Neural-B">
    <prosody rate="slow" pitch="-5%">
      Welcome to <phoneme alphabet="ipa" ph="ˈfɪntɛk">FinTech</phoneme> Solutions.
      <break time="500ms"/>
      Where we <emphasis level="strong">architect</emphasis> your financial future.
    </prosody>
  </voice>
</speak>
```

By implementing this at the API level for your customer service bots and video content, you ensure that your brand “sounds” the same whether a user is on your site or asking Siri for a recommendation.
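Hard-coding only works if someone checks it. Below is a rough sketch of a lint step that parses SSML and reports any prosody setting that drifts from the brand profile. The `BRAND_PROSODY` values and the `prosody_violations` helper are assumptions made for illustration; the parsing relies on Python's standard `xml.etree.ElementTree` module.

```python
import xml.etree.ElementTree as ET

# ElementTree prefixes namespaced tags with the namespace URI in braces.
SSML_NS = "{http://www.w3.org/2001/10/synthesis}"

# Hypothetical brand profile: the only prosody settings the brand allows.
BRAND_PROSODY = {"rate": "slow", "pitch": "-5%"}

def prosody_violations(ssml, profile=BRAND_PROSODY):
    """Return (attribute, actual, expected) for each prosody setting that is off-profile."""
    root = ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
    problems = []
    for node in root.iter(SSML_NS + "prosody"):
        for attr, expected in profile.items():
            actual = node.get(attr)
            if actual != expected:
                problems.append((attr, actual, expected))
    return problems

on_brand = (
    '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="en-GB">'
    '<prosody rate="slow" pitch="-5%">Welcome.</prosody></speak>'
)
off_brand = on_brand.replace('rate="slow"', 'rate="fast"')

clean_report = prosody_violations(on_brand)
drift_report = prosody_violations(off_brand)
```

Here `clean_report` is empty and `drift_report` flags the `rate` attribute. A check like this could run in a build pipeline so off-brand markup never reaches the speech engine.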

The State of Multimodal Creative Strategy in 2026

The most significant shift in the last 18 months has been the “Multimodal Input” capability of AI models like GPT-5 and its successors.

These models can “see” an image and “hear” a voice simultaneously. This means that for the first time, an AI can judge your brand’s consistency just like a human does—but with perfect memory.

If your print ads use a different tone of voice than your TikTok captions, the AI will notice the discrepancy. This results in a lower “Trust Score” in Generative Search.

The “Trust Radius” of a brand in 2026 is built on the lack of contradiction across modalities.

The Myth: “Visual-First Branding”

Visuals are no longer the most crucial part of your brand.

The industry has lied to you for decades because selling “logos” is easy. But a logo is just a badge. In a world where 40% of users are using voice search to find local services, your logo is invisible.

The “Visual-First” approach is obsolete. It’s a legacy mindset from the era of billboards and magazines.

A modern, effective strategy is Semantic-First. You define the “Entity” (the meaning), and then you “render” it into text, images, and voice.

If you start with the visual, you are trying to build a house by picking the wallpaper before you’ve poured the foundation. Stop it. It’s expensive, it’s inefficient, and it makes you look like an amateur.

Dominating the “AI Overview” with Multimodal Signals

In 2026, traditional blue links are secondary. The AI Overview (or “AI Mode”) is the destination. These systems do not just aggregate text; they synthesise “Evidence.”

How Multimodal Consistency Influences AI Rankings:

- Information Gain: AI engines reward pages that provide unique, non-textual data. A custom-designed infographic that is appropriately labelled (via Schema.org ImageObject) provides higher “Information Gain” than 1,000 words of generic text.

- Cross-Modal Verification: If a Google AI agent finds a video where the transcript (Aural) perfectly matches the page copy (Textual) and the visual frames (Visual), it assigns a significantly higher Trust Score.

- The “Zero-Click” Strategy: By providing structured data for your voice and image assets, you ensure your brand is the “featured source” in voice search, even if the user never visits your website.
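Labelling an infographic with Schema.org ImageObject might look like the sketch below. The URL, caption, and `image_object_jsonld` helper are placeholders; `contentUrl`, `caption`, and `keywords` are standard Schema.org properties. Whether any given AI engine rewards this markup is not something the code can show.

```python
import json

def image_object_jsonld(content_url, name, caption, keywords):
    """Describe one image as a Schema.org ImageObject using the brand lexicon."""
    return {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "name": name,
        "caption": caption,
        "keywords": ", ".join(keywords),  # comma-separated, per common practice
    }

# Placeholder values for a custom infographic.
infographic = image_object_jsonld(
    content_url="https://www.example.com/img/fraud-flow.png",
    name="Fraud detection flow",
    caption="How a flagged payment moves through review in under 200 ms.",
    keywords=["fraud detection", "payment security"],
)
markup = json.dumps(infographic, indent=2)
```

Keep the caption and keywords in the same lexicon as the surrounding copy so the text and the image describe the same entity.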

The Verdict

Multimodal Creative Strategy is not a “nice-to-have” design trend. It is a technical requirement for doing business in an AI-saturated market.

If your text, images, and voice are not pulling in the same direction, you are generating friction that will eventually bankrupt your marketing efforts.

You need to:

- Define your Semantic Core: What words does your brand own?

- Align your Visual Vectors: Does every image reinforce that core?

- Code your Aural Persona: How does your brand sound when it speaks?

Don’t be the business owner who spends a fortune on a “look” while ignoring the “feel” and the “sound.”

If you are serious about fixing your brand’s fragmented identity, explore our services and let’s get to work.

Frequently Asked Questions (FAQ)

Does a Multimodal Strategy help with AI Overviews?

Yes. AI systems like Gemini and SearchGPT prioritise content where the text, images, and video transcripts are semantically aligned. This consistency makes it easier for the AI to extract your brand as the “definitive answer” for a query.

What is “Vector Dissonance” in branding?

This is shorthand for when your brand’s assets are mathematically inconsistent. If your text is “luxury” but your images are “discount,” an AI’s vector embeddings will place them in distant regions of its embedding space, which weakens the association between them and can lead to lower visibility in search results.

How do I start if I have zero budget for video or voice?

Start with Semantic-First text. Define your lexicon (the specific words you own). Then, ensure your alt-text and image captions use that exact lexicon. This costs nothing but time and ensures the AI “sees” a connection between your text and images.
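The alt-text check is easy to automate with Python's standard library. This is a minimal sketch: the sample HTML, the lexicon terms, and the `images_missing_lexicon` helper are invented for illustration, and matching is a plain substring test.

```python
from html.parser import HTMLParser

class AltTextCollector(HTMLParser):
    """Collect (src, alt) for every <img> tag in an HTML fragment."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr_map = dict(attrs)
            # A missing or empty alt attribute is treated as empty text.
            self.images.append((attr_map.get("src", ""), attr_map.get("alt") or ""))

def images_missing_lexicon(html, lexicon):
    """Return the src of each image whose alt text uses none of the lexicon terms."""
    collector = AltTextCollector()
    collector.feed(html)
    terms = [term.lower() for term in lexicon]
    return [
        src
        for src, alt in collector.images
        if not any(term in alt.lower() for term in terms)
    ]

page = (
    '<img src="vault.jpg" alt="Encrypted vault protecting client assets">'
    '<img src="team.jpg" alt="Our team">'
    '<img src="hero.jpg">'
)
missing = images_missing_lexicon(page, ["encrypted", "vault", "audit"])
```

In this example `missing` lists `team.jpg` and `hero.jpg`, the two images whose alt text does nothing for the brand entity.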

Can I use AI to automate my Multimodal Strategy?

Partially. You can use tools like Adobe Firefly to ensure visual consistency and ElevenLabs for voice, but the Semantic Core—the meaning and purpose of your brand—must be defined by a human. AI is the renderer, not the strategist.

How does this affect accessibility and WCAG standards?

Multimodal strategy supports accessibility, but it does not guarantee conformance on its own. Conveying your message through text, sight, and sound lines up with core WCAG 2.2 requirements such as text alternatives for images and captions or transcripts for audio and video. A well-implemented strategy ensures that a visually impaired user (hearing the voice) and a hard-of-hearing user (reading the text) receive the same brand experience.

How do I start a multimodal audit?

Start by documenting every sensory touchpoint: your website copy, social media images, YouTube videos, and customer service tone. Compare them side-by-side. If they don’t feel like they were created by the same person with the same goal, you have a fragmentation problem.
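A side-by-side comparison can start as a crude vocabulary check. The sketch below scores each pair of touchpoints by Jaccard overlap of their words. The sample copy and the four-letter word filter are assumptions, and shared vocabulary is only a rough proxy for a shared voice.

```python
import re
from itertools import combinations

def vocabulary(text):
    """Lower-cased set of words longer than three letters (a crude stop-word filter)."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 3}

def jaccard(a, b):
    """Shared vocabulary divided by combined vocabulary; 0.0 when both are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def audit(touchpoints):
    """Score every pair of touchpoints, lowest overlap first."""
    vocab = {name: vocabulary(text) for name, text in touchpoints.items()}
    scores = [(jaccard(vocab[x], vocab[y]), x, y) for x, y in combinations(vocab, 2)]
    return sorted(scores)

# Invented sample copy for three touchpoints.
touchpoints = {
    "website": "We architect secure payment infrastructure for regulated banks.",
    "social": "Secure payment infrastructure, built for regulated banks.",
    "support": "Hey there! Super happy to sort that out for you today :)",
}
report = audit(touchpoints)
```

The pairs at the top of `report` are the ones to read side by side first. In this sample, the support copy shares no vocabulary with the website or the social copy.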

Does this strategy increase the cost of content creation?

Initially, yes, because it requires more planning and technical expertise. However, in the long run, it saves money by reducing “waste.” You stop creating content that doesn’t convert and start building a reusable “asset library” that is semantically aligned with your brand.

What role does AI play in Multimodal Creative Strategy?

AI is both the tool and the judge. We use AI to generate consistent visual styles and synthetic voices, but we also acknowledge that AI-driven search engines are the ones “grading” our consistency. It’s a closed-loop system that rewards technical precision.

Why is “Visual-First” branding considered a myth now?

Visuals are often the last thing a customer interacts with in a search-driven or voice-driven journey. If your “textual” or “aural” identity fails to grab attention or build trust, the customer will never even see your beautiful logo or website design.

How do “Vector Embeddings” relate to my brand?

In technical terms, AI sees your brand as a set of mathematical coordinates in “latent space.” A multimodal strategy ensures that those coordinates are tightly clustered. If your “text coordinates” are far away from your “image coordinates,” the AI sees you as “unclear” or “low authority.”

What is the difference between branding and Multimodal Creative Strategy?

Branding is the “what” and the “why”—your values and your look. Multimodal Creative Strategy is the “how”—the technical execution of those values across text, image, and voice to ensure maximum impact and zero friction in a modern digital environment.

How often should I update my multimodal strategy?

It should be reviewed annually. As AI capabilities evolve and consumer habits shift (e.g., the rise of new social platforms or hardware like AR glasses), the “technical” part of your strategy will need to adapt to remain effective.